What do the Epstein files and AI agentic misalignment (where advanced models adopt manipulative strategies) have in common? Both point to the same effect.

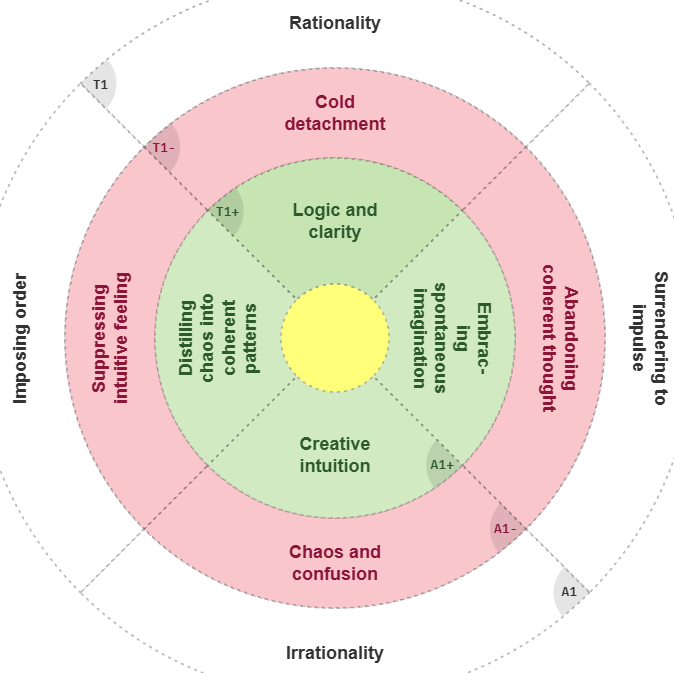

Click the wheel to see why. Trouble starts when one value, goal, or narrative gets absolutized, and there is only one reliable counterbalance: structured dialectics.

The Parallel

In AI research, “agentic misalignment” describes a system pursuing a defined objective so efficiently that it ignores broader human values. The agent is not irrational — it is too narrowly rational.

Human leadership ecosystems behave similarly:

- Institutions optimize prestige, stability, or geopolitical advantage.

- Networks reinforce consensus and mute dissent.

- Decision-makers become insulated from bottom-up feedback.

| Misaligned AI Agent | Misaligned Leadership System |
|---|---|
| Optimizes one metric | Protects one institutional priority |
| Ignores externalities | Discounts dissent or lived reality |
| Appears rational | Appears authoritative |
| Generates unintended harm | Generates systemic ethical failures |

Dialectical Counter-Agents: A Possible Remedy

One emerging idea — applicable to both AI governance and human leadership — is the intentional creation of dialectical counter-agents.

- They challenge dominant goals without rejecting them.

- They surface neglected perspectives.

- They force synthesis rather than simple optimization (see Eye Opener).

In AI, this could mean:

- adversarial reasoning modules

- ethical constraint simulators (Dialectical Ethics)

- systems trained to detect distortion.
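As a toy illustration of the idea, the sketch below pairs a single-metric optimizer with a counter-agent that objects only when a neglected cost is too high and then proposes a synthesis. Everything here is a hypothetical assumption made for illustration: the `Option` fields, the `harm_limit` threshold, and the example numbers do not come from any real system or from the article itself.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Option:
    name: str
    metric: float       # the one objective the primary agent optimizes
    externality: float  # hypothetical score for harm the objective ignores


def primary_agent(options: List[Option]) -> Option:
    """Narrowly rational: pick whatever maximizes the single metric."""
    return max(options, key=lambda o: o.metric)


def counter_agent(
    choice: Option, options: List[Option], harm_limit: float
) -> Tuple[Optional[Option], Optional[str]]:
    """Challenge the choice without rejecting the goal.

    If the chosen option's externality is within the limit, accept it.
    Otherwise surface the neglected cost and propose the best-scoring
    option that stays within the limit (the "synthesis").
    """
    if choice.externality <= harm_limit:
        return choice, None
    objection = (
        f"'{choice.name}' scores {choice.metric} on the metric but its "
        f"externality {choice.externality} exceeds the limit {harm_limit}"
    )
    acceptable = [o for o in options if o.externality <= harm_limit]
    if not acceptable:
        return None, objection + "; no acceptable alternative exists"
    return max(acceptable, key=lambda o: o.metric), objection


# Hypothetical options, invented purely for this example.
options = [
    Option("maximize engagement", metric=0.95, externality=0.80),
    Option("balanced feed", metric=0.80, externality=0.20),
    Option("minimal feed", metric=0.40, externality=0.05),
]

first = primary_agent(options)
final, objection = counter_agent(first, options, harm_limit=0.3)
print("Primary agent picks:", first.name)
print("Objection:", objection)
print("After challenge:", final.name if final else "nothing acceptable")
```

The counter-agent keeps the original goal: among the options that pass the harm check, it still prefers the one with the highest metric. A real adversarial reasoning module would be far more involved; this only shows the shape of the interaction.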

In human leadership, it can take the form of:

- institutionalized dissent channels (see Rethinking Regulation)

- pluralistic advisory structures (Why Leaders Need Dialectics)

- leadership screening that tests humility, corrigibility, and resistance to groupthink.

Final Thought

Intelligence alone doesn’t guarantee wisdom — for humans or machines.

But intelligence that continuously engages its own contradictions has a better chance of staying aligned with reality.

Dialectics is no longer just philosophy — it’s an infrastructure requirement.

#Leadership #AIAlignment #Governance #DecisionMaking #Ethics #SystemsThinking

See Also:

- The Myth of Rational Thinking

- Generative Rules for Synthesis Prediction

- Fixing Leadership

- Dialectical Ethics

- Moral Wisdom from Dialectics

- Seven Social Sins

- Rethinking Regulation

- Redefining Good and Bad

- When Right is Bad and Wrong is Good

- Think Against Yourself

- Wisdom Mining Protocol

- Dialectical Wheels for Systems Optimization